Iran’s War Has a Second Battlefield Now: Artificial Intelligence

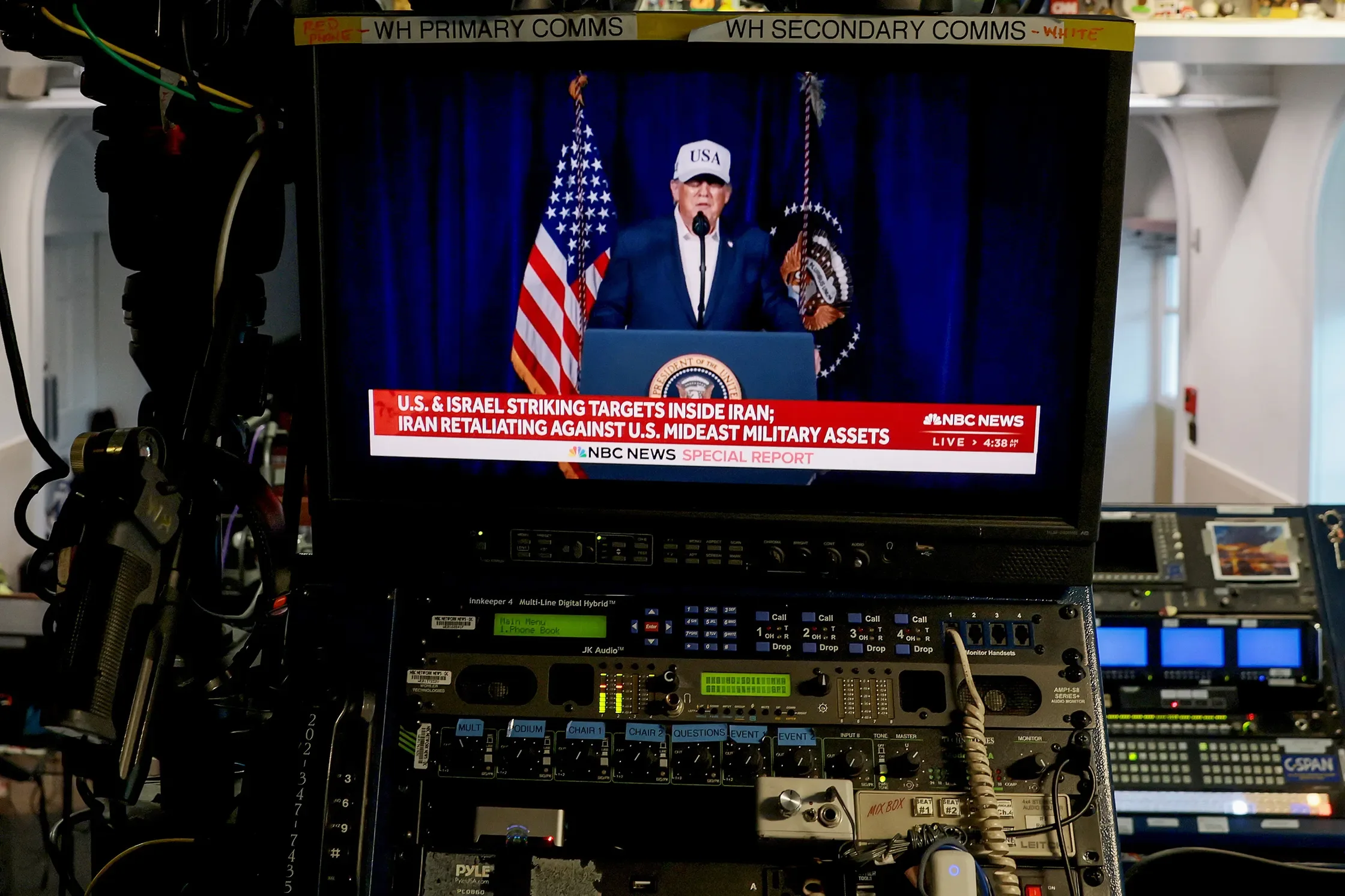

By Monday, March 2, 2026, the Iran conflict had already become two wars running in parallel. There’s a kinetic one with airstrikes, missiles, drones. Then there’s an informational one with viral clips, recycled footage, AI-generated fabrications, and influencer accounts “reporting” victories that never happened.

That second war is why you can watch a video that *looks* like Iran destroying a U.S. ship or a drone smashing into a building in Tel Aviv, then search for corroboration and find… nothing.

In many cases, the nothing is the point.

This conflict is real, fast-moving, and heavily documented by major outlets. Reuters and others have reported U.S.-Israeli strikes on Iran and rapid escalation across the region, including Iranian missile and drone retaliation and confirmed casualties in Israel and elsewhere.

At the same time, fact-checkers and reporters are documenting a flood of misattributed clips and outright fake “battle footage” spreading on X and other platforms, especially dramatic “proof” videos that show:

A U.S. aircraft carrier (often named as the USS Abraham Lincoln) on fire or sunk: viral, widely shared, and not supported by credible reporting, with the U.S. military publicly denying the claim.

A U.S. jet being downed over Iran: circulating footage that fact-checkers say is pulled from a military-themed video game and repackaged as real.

A broader wave of old videos reused as “breaking news,” AI-generated or altered visuals, and game clips framed as frontline footage, all amplified by high-reach accounts.

So if you’re seeing sensational clips—“U.S. craft destroyed,” “carrier sunk,” “Tel Aviv flattened”—and can’t find reputable confirmation, you’re running into a battlespace where deception is cheap and attention is the prize.

Is Iran doing it intentionally? Sometimes. But not only Iran.

It’s tempting to imagine a single mastermind behind the fog, but reality is messier—and more dangerous.

Yes, states and aligned networks do run information ops. But the flood is also driven by:

Partisan and diaspora ecosystems trying to “win the narrative” in real time

Engagement merchants monetizing chaos (especially on platforms where virality can pay)

Copycat accounts recycling old footage because it’s fast, emotional, and hard to disprove instantly

That’s why you’ll see false claims spreading even when they don’t cleanly serve Tehran’s interests.

In 2026, propaganda is also a marketplace, and many of the people in it profit from attention regardless of whose side a clip serves.

How AI changes this war (and why it feels like you can’t trust your eyes)

Generative AI is cheapening “evidence.” It’s now trivial to produce plausible-looking imagery, fake voice clips, and “breaking news” packages that feel like they came from a newsroom.

And even when the clip isn’t fully AI-generated, AI tools still accelerate the assembly line: editing, translating, captioning, and optimizing misinformation for maximum reach.

Researchers and monitoring groups have been warning that synthetic media doesn’t just sow confusion during crises; it can also accelerate them.

The social media timeline has become a weapon system. In older wars, propaganda was slower: posters, radio segments, staged press conferences.

In 2026, a single account can upload a fabricated strike video, attach the right caption, and let the platform’s engagement incentives do the rest.

If the account is plugged into amplification networks, whether ideological communities, coordinated repost rings, or paid “blue-check” virality, millions can see the clip before any serious verification happens.

This conflict is being flooded with misleading and false posts: misattributed footage, altered visuals, and even video game clips presented as real battlefield documentation.

The deeper objective isn’t always to convince the public of one specific lie. Even when a fake is debunked, it can still do useful work for whoever benefits from confusion: it can make an enemy look weak, inflate perceived capabilities (“we can hit anything”), exhaust the public’s ability to tell what’s real, and nudge decision-makers toward overreaction.

That’s why this feels like a new front in the war. AI plus platform mechanics has turned propaganda into something closer to artillery. The fight is now also over shared reality: over what counts as proof, and how quickly a crowd can be made to believe it.